Behavior management #7: Analyzing functions of behavior

Why and how could we test functions of behavior?

As I argued in a previous post in this series, assessing the function of a problem behavior is critical to understanding its causes. Once we identify the function a behavior serves, we can change environments to change the behavior.

In the early days of behavior management, educators and other caregivers usually addressed problem behaviors by providing powerful consequences (punishment or reinforcement or a combination of them) and that was it. Once we learned that we could assess the functions of problem behaviors, identifying the conditions under which they occurred, we could develop behavior intervention plans (BIPs) that were tailored to the unique needs of individuals. (Sounds like special education to me!)

To understand the causes of students’ misbehavior, we do not need to seek explanations that are echoes of distant childhood events or even other socio-cultural conditions (e.g., neighborhood poverty). To be sure, those factors may have minor to modest effects, but educators can do better.

Starting with FBAs

Educators can generate useful hypotheses about the causes of misbehavior by simply examining the environments where it occurs and doesn’t occur. They follow the general outline in preceding posts to generate hypotheses about the causes of the misbehavior. If Behavior Problem M occurs under certain circumstances but not under other circumstances, that constitutes pretty strong evidence that those circumstances influence the occurrence of the behavior.

One obvious consequence of generating hypotheses about why misbehavior occurs would be to test those hypotheses. Said another way, educators must focus on modifying the environments in which behavior occurs by using the mechanisms of science.

Educators should devote their efforts to changing classroom, hallway, and other school environments so that behavior changes (B <==> E). We may want to eliminate poverty, discrimination (racial, sexual), violence, and more (I do!), but we don’t have control of the levers (other than our votes) to make grand changes like that.

Educators’ best shot at doing good is to teach effectively. We can’t pass out $1000/month or implore people of a nation to treat each other equitably and peacefully, but we can teach students so that they have a better chance to make more $$ in the job market and they have lots of practice at treating classmates of different ethnic backgrounds, different genders, and different sexual orientations with consideration and kindness.

Generating hypotheses about proximal causes is understandable, because we know that behavior and environment are locked in an eternal dance. And, we know that FBAs can help us generate those hypotheses.

To generate hypotheses about causes of (mis-)behavior, educators can systematically analyze evidence about the conditions present when the behavior occurs (antecedents) and the conditions that follow the behavior (consequences). That is, we can record the As, Bs, and Cs. This was the topic of the #5 post in the series.
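For readers who keep their ABC records in a spreadsheet or notes file, here is a minimal sketch of how those records might be tallied. Everything in it is hypothetical: the sample entries, the name `abc_log`, and the helper `tally_abc` are made up for illustration and do not come from any of the studies discussed in this post.

```python
from collections import Counter

# Hypothetical ABC records: (antecedent, behavior, consequence).
# These entries are invented for illustration only.
abc_log = [
    ("math worksheet assigned", "tantrum", "sent to hallway"),
    ("math worksheet assigned", "tantrum", "worksheet removed"),
    ("free reading", "on task", "teacher praise"),
    ("math worksheet assigned", "on task", "teacher praise"),
    ("spelling test announced", "tantrum", "sent to hallway"),
]


def tally_abc(log, target_behavior):
    """Count the antecedents and consequences recorded with the target behavior."""
    antecedents = Counter(a for a, b, _ in log if b == target_behavior)
    consequences = Counter(c for _, b, c in log if b == target_behavior)
    return antecedents, consequences


antecedents, consequences = tally_abc(abc_log, "tantrum")
print("Antecedents:", antecedents.most_common())
print("Consequences:", consequences.most_common())
```

A tally like this does not prove anything. It only points toward a hypothesis (here, perhaps, that tantrums follow academic demands and lead to getting out of the work) that still has to be tested.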

Why test hypotheses

But, we have to remember that FBAs only give us hypotheses. If educators want to be dang certain that they have identified the cause of a behavior, they need to test their hypotheses. Just saying, “Oh, this is our idea, and we believe it’s true” is insufficient. If the hypothesis is mistaken, you’re wasting a lot of your own time and, especially importantly, the learner’s time!

So, we need to conduct a “functional analysis” (FA, or “function analysis” or sometimes “standard functional analysis”). An FA differs from a simple FBA. The latter is descriptive, but the former is empirical.

If you’ve heard about FA with regard to “challenging behavior” for individuals with autism, you’re ahead of the game. It’s important that readers not associate FA only with autism or students with severe disabilities, though. Educators can employ the same technology to test hypotheses about behavior problems of individuals with emotional and behavior problems (Kern et al., 1994), academic issues (McKenna et al., 2015), and so forth.

How to test hypotheses

Testing FBA-based hypotheses is not difficult. It requires some simple objective observations and systematic arrangement of conditions (i.e., environments!). To be sure, it requires deference to systematically collected data about behavior, not to one’s personal impressions.

The logic is pretty simple:

We collect systematic observations of the behavior of concern (“self-stimming,” “throwing a tantrum when given an assignment,” or “saying antagonizing things to peers”) over various conditions. The conditions would include

Environment 1, in which (according to an FBA, for example) the behavior will *not* occur and

Environment 2, in which the FBA hypothesis is that the behavior *will* occur.

We test these two conditions carefully (keeping other features constant). The observational data will tell the story!

There are two possible outcomes:

Oops...something went wrong? The problem behavior occurred in both Environments 1 and 2? If the behavior occurs more frequently (intensely, for a longer time, etc.) under the environment that our FBA hypothesis did not predict, we might be disappointed. But all is not lost; we are not hosed. We learned a lot! Our prediction got it wrong! It’s not the fault of the child! There’s no need to go searching for some “deeper,” “hidden” psychological reason for the behavior. So be it! Our prediction was wrong. But, we learned when the behavior actually occurs! It looks like the behavior is more likely under a different environment. Using those data, we can build a better hypothesis for the cause and test it…leading to a better plan for the student.

Yay...the hypothesis was right! Often, FAs confirm the hypotheses that people generated when they conducted FBAs. Yay! We got it right!

Educators learned that “given X environment-conditions, the behavior is more likely to occur, but given Y environment-conditions, it is less likely to occur.” Now the educators have serious hints about how to modify environments so that the problem behavior is less likely to occur! On the basis of these results, they can employ a plan to improve behavior...a BIP!
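The comparison logic described in this section can be sketched in a few lines. This is only an illustration under stated assumptions: the session counts are invented, the function name `compare_conditions` is hypothetical, and the "no overlap between conditions" rule is a crude stand-in I chose for the careful visual inspection of graphed data that researchers actually use.

```python
from statistics import mean

# Hypothetical counts of the problem behavior per observation session.
# These numbers are invented for illustration only.
sessions = {
    "environment_1": [1, 0, 2, 1],  # FBA predicts the behavior will NOT occur
    "environment_2": [6, 8, 5, 7],  # FBA predicts the behavior WILL occur
}


def compare_conditions(data, predicted_low, predicted_high):
    """Compare mean behavior counts across two conditions.

    Assumption: the hypothesis counts as supported only when the predicted-high
    condition has the higher mean AND no session in the predicted-low condition
    reaches the lowest session in the predicted-high condition.
    """
    low = mean(data[predicted_low])
    high = mean(data[predicted_high])
    ranges_overlap = max(data[predicted_low]) >= min(data[predicted_high])
    if high > low and not ranges_overlap:
        verdict = "consistent with the hypothesis"
    elif high > low:
        verdict = "weak support; collect more sessions"
    else:
        verdict = "not supported; revise the hypothesis"
    return low, high, verdict


low, high, verdict = compare_conditions(sessions, "environment_1", "environment_2")
print(f"Environment 1 mean: {low}, Environment 2 mean: {high} -> {verdict}")
```

Either verdict is useful. A "not supported" result tells educators where the behavior actually occurs, which is the starting point for a better hypothesis.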

Examples

In this section, I’ll summarize three studies illustrating the FBA-FA sequence of identifying causes of behavior.

Kern et al. (1994)

Kern and colleagues (1994) worked with Eddie, an 11-year-old who was quite bright but whose behavior led to his schooling in a program for students with Emotional and Behavioral Disorders. Eddie switched from one 45-min class period to another, each focused on a different subject (English, math, and spelling). He had problem behaviors (tantrums, self-injury, failing to complete work) in each of them.

The researchers collected data about on-task behavior in each class setting as a means of evaluating alternative hypotheses and assessing whether the FBA-FA process worked. To collect the data, independent observers, who had been trained to report accurately, observed Eddie daily for 30 minutes in each situation.

Initially, for their FBA, Kern et al. conducted ABC assessment. They also interviewed teachers and Eddie himself. They quickly developed a hypothesis that misbehavior occurred when there were demands for academic behavior; Eddie’s behavior functioned to get him out of performing the required work—he was escaping it.

They learned that there seemed to be other conditions that mattered (e.g., whether tasks required written work, how long the assignments were, whether Eddie worked in a study carrel, etc.). So they conducted what we’re calling an FA. That is, they then assessed Eddie’s on-task behavior under multiple different conditions. Some hypotheses were confirmed by the FA.

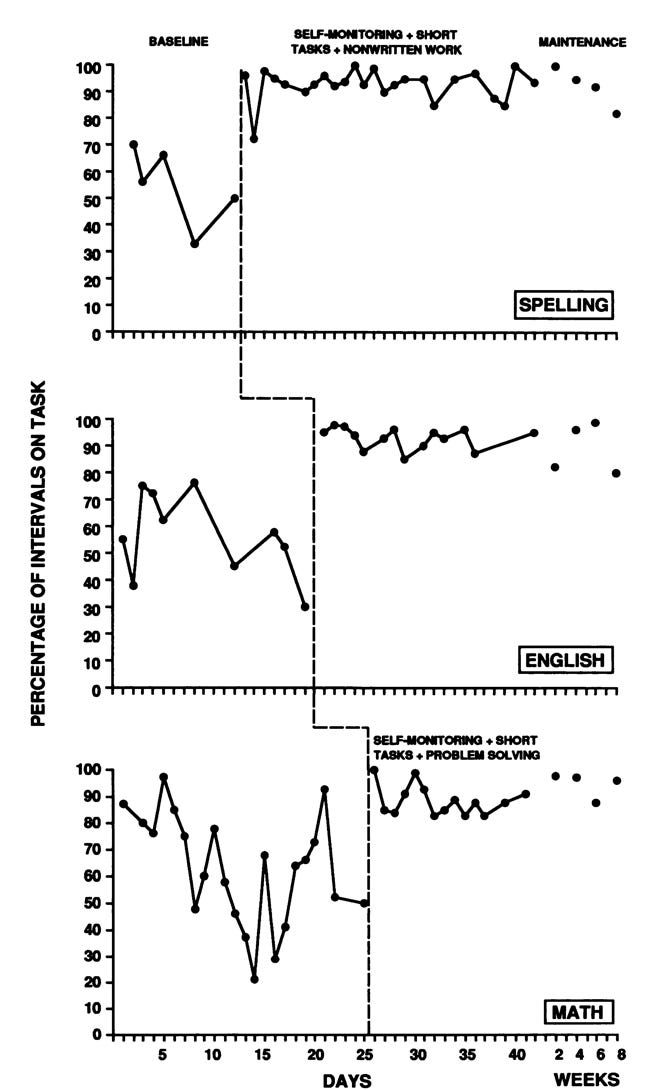

So, the next step was to develop a plan (often called a “behavior intervention plan” or “BIP”) for using what they’d learned from the FBA and FA to create environments that would lead to better performance for Eddie. The teachers and researchers implemented variations on the plan depending on the class and tasks, but the trained observers assessed on-task behavior across the situations. Here is a graph showing what happened with Eddie’s attention to task during baseline conditions and when the various BIPs were put into place.

Pretty clearly, the BIP changed Eddie’s behavior. Read the entire report of the study. It’s free and it’s linked in the sources (Kern et al., 1994).

Clarke et al. (2002)

Although they used slightly different language to refer to aspects of their work, Clarke and colleagues (2002) followed a process similar to the one that Kern et al. (1994) employed. They worked with a 12-year-old student named Mindy. Mindy was new to the school, lived with her Polish-speaking family, and had severe Intellectual Disability as well as autism spectrum disorder. When Mindy was given requests, she often “engaged in self-injurious behavior (biting), physical resistance, aggression, property destruction, and screaming” (p. 132).

Mindy’s teacher and the researchers, working as a team, reviewed archival records, conducted interviews, and directly observed Mindy’s behavior. Their assessment revealed that the problem behavior occurred “primarily during daily school preacademic activities and transitions” (p. 133). They hypothesized that Mindy’s “problem behaviors were motivated primarily by escape” (p. 133). When she acted up, she got out of the aversive situation.

The research team devised plans for intervention. For example, for conditions where Mindy was to complete a puzzle, they provided a similar task, rotated materials, made the materials more appealing, and provided enthusiastic praise. For tasks requiring Mindy to assemble pegboard forms onto color-matching areas, they provided a similar task, introduced more functionally relevant activities (e.g., assembling a McDonald’s meal pack), etc. In addition to these two modifications, they made five others.

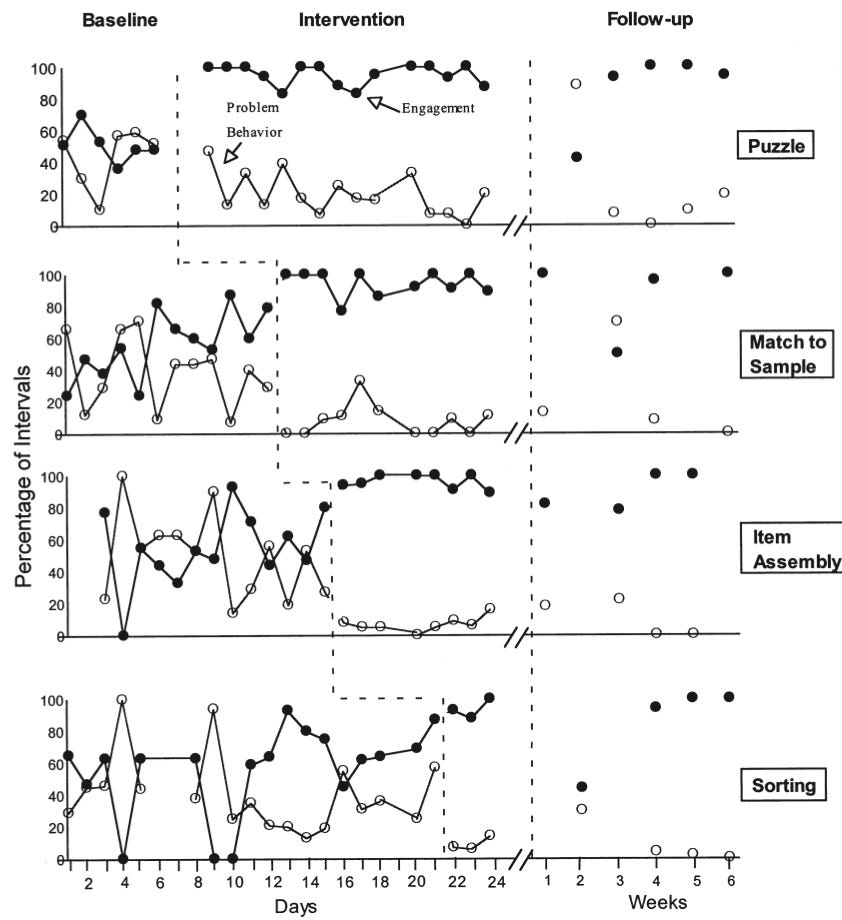

The next figure shows the effects of the Clarke et al. BIP on Mindy’s engagement and problem behavior. Not only did problem behavior decrease and engagement increase, but the effects lasted for many weeks (actually into a new school year).

Guess what! They got it right and the result was an improved life for Mindy and an easier teaching situation for her teachers.

Haydon (2012)

Haydon (2012) examined the effects of modifying the difficulty of tasks assigned to a student on (a) attending to task, (b) disruptive behavior, and (c) accurate responding. The behavior of Mikey, a 5th-grade boy identified as having a learning disability, was the focus of this case study. During math seatwork, Mikey would make noises, laugh, sing, kick his chair, talk to or tap on neighboring students, and even walk out of the room. In addition, he would answer only about 20% of the seatwork items correctly.

Haydon interviewed Mikey’s general and special education teachers, conducted ABC observations, and analyzed Mikey’s work sheets. As a team, they hypothesized that Mikey was using these behaviors to escape having to work on his assignments during math seatwork.

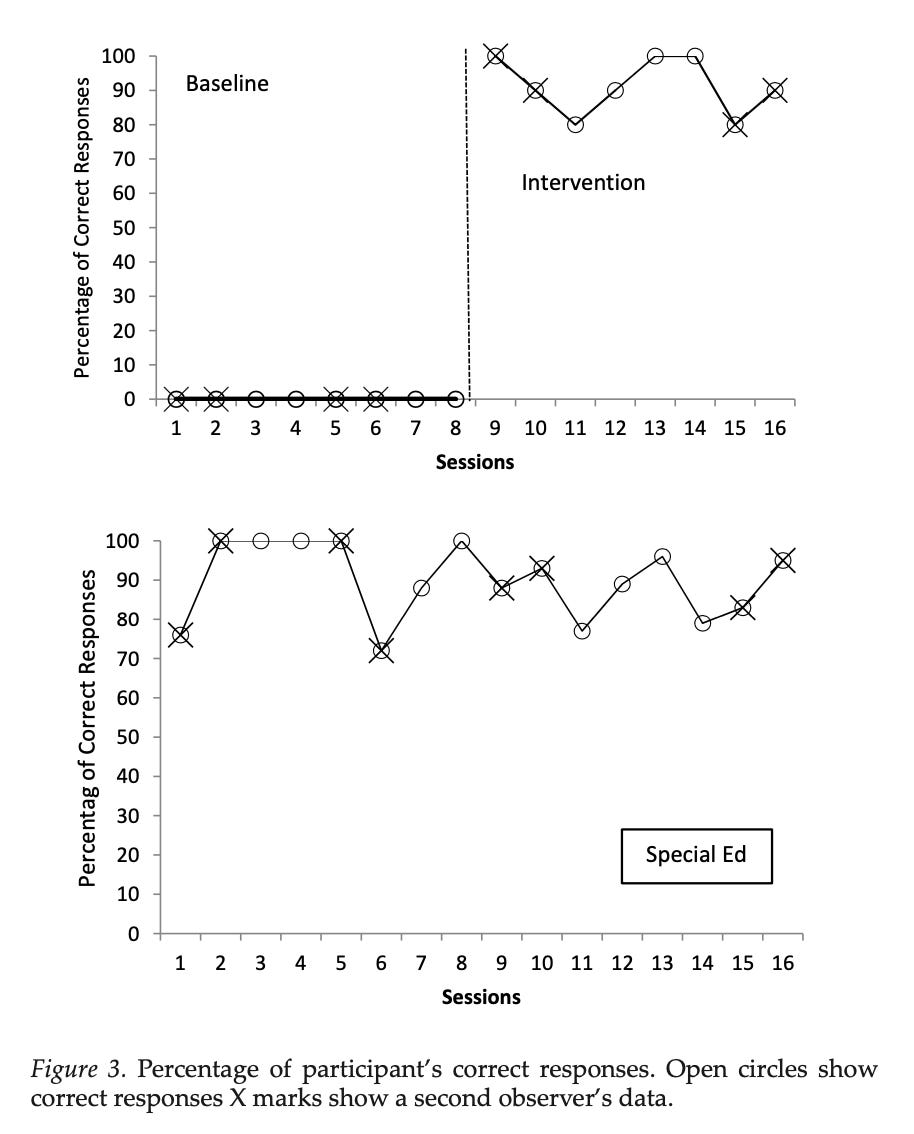

Pre-service teacher education students observed Mikey’s behavior in both the general education and special education settings. First, they observed under baseline conditions and then they continued to observe when the general education teacher implemented a plan: Provide Mikey assignments that were consistent with his skill level, as revealed by the analysis of the errors on his work sheets.

The accompanying graph shows what happened with one of the three measures that Haydon (2012) reported. As the bottom part of the figure shows, Mikey rarely got fewer than 80% of the math assignments correct in the special education setting. The upper part shows, however, that he was getting 0% correct in the general education setting until the assignments were changed to match his skill level; then his work improved dramatically.

Similar substantial improvements occurred for on-task and disruptive behavior. So, Haydon and the teachers figured out the function of Mikey’s problems and, by changing the environment, helped him work better.

Failures?

To be sure, the FBA-FA-BIP process can fail. There are many possible reasons things might not go as we would hope, and there are practical guidelines for how to avoid or address those failures (e.g., Hirsch et al., 2017).

Sometimes behavior is a function of multiple conditions. When this occurs, the FA is more complicated, but the basic process can still work.

Conceptually, to understand failures in BIPs educators must continue to analyze the behavior-environment situation! What’s working? When is it failing? Which of the reasons Hirsch et al. identified are likely the cause? Is it something else?

Additional reflections

Since people learned about examining the function of behavior (thanks to Ted Carr, 1977), there have been hundreds of studies employing pretty much the combination of FBAs, FAs, and BIPs described in this post. In fact, Hanley and colleagues (2003) identified nearly 300 studies prior to the year 2000 and Beavers et al. (2013) found more than 150 between 2001 and 2012. That amount of work encourages me to call the process “evidence based.” The process has been the source of many valuable advancements in different areas (e.g., autism; Leaf et al., 2021), including schools (see Mueller et al., 2013).

There are many other valuable resources about FBA-FA-BIP in special education. Take a look at Lane et al. (2009) for a great how-to overview. For a brief text that can help one explain the process to assistants, see Bateman and Golly (2011). Read Gable et al.’s (2014) recommendations. This technology has matured, and it will continue to evolve toward greater effectiveness.

And using FBAs and FAs will help educators not just to understand causes, but also to create beneficial environments for learning. That is, by creating these beneficial, effective environments, educators will help themselves as well as their students.

Importantly, also, please don’t think of the FBA-(FA)-BIP process as a US-centric approach. There are plenty of examples from different cultures (e.g., Maharmeh et al., 2019). In fact, I wonder if the logic could be applied to the misbehavior we usually call “war.”

Flip the script

Last point: What if we flip the script and analyze correct behavior, not misbehavior? What if we collect data about when learners “get things right”? In what environments do kids learn? Might we realize that they do so when we educators use Procedures X, Y, and Z, but not Procedures M, N, and P?

Well, Papal smoke! I bet we’d be talking about effective instruction! It’d be about creating environments for effective learning!

So, the take-aways

Understanding the functions of behavior is powerful. The FBA-FA-BIP process is personalized; it’s different for each individual and situation. Educators can determine functions pretty readily, using FBAs and FAs. These data can not only explain the causes of misbehavior, but also provide direction for how to help learners by creating BIPs, thus overcoming their problems. That’s why they should be a part of sensible IEPs.

Sources

Bateman, B. D., & Golly, A. (2011). Why Johnny doesn’t behave: Twenty tips and measurable BIPs. Attainment.

Beavers, G. A., Iwata, B. A., & Lerman, D. C. (2013). Thirty years of research on the functional analysis of problem behavior. Journal of Applied Behavior Analysis, 46(1), 1–21. https://doi.org/10.1002/jaba.30

Blair, K. S. C., Umbreit, J., Dunlap, G., & Jung, G. (2007). Promoting inclusion and peer participation through assessment-based intervention. Topics in Early Childhood Special Education, 27(3), 134-147. https://doi.org/10.1177/02711214070270030401

Carr, E. G. (1977). The motivation of self-injurious behavior: A review of some hypotheses. Psychological Bulletin, 84(4), 800–816. https://doi.org/10.1037/0033-2909.84.4.800

Clarke, S., Worcester, J., Dunlap, G., Murray, M., & Bradley-Klug, K. (2002). Using multiple measures to evaluate positive behavior support: A case example. Journal of Positive Behavior Interventions, 4(3), 131-145. https://doi.org/10.1177/10983007020040030201

Gable, R. A., Park, K. L., & Scott, T. M. (2014). Functional behavioral assessment and students at risk for or with emotional disabilities: Current issues and considerations. Education and Treatment of Children, 37, 111–135. https://doi.org/10.1353/etc.2014.0011

Hanley, G. P., Iwata, B. A., & McCord, B. E. (2003). Functional analysis of problem behavior: A review. Journal of Applied Behavior Analysis, 36, 147–185. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1284431/pdf/12858983.pdf

Haydon, T. (2012). Using functional behavior assessment to match task difficulty for a 5th grade student: A case study. Education and Treatment of Children, 35(3), 459-476. https://doi.org/10.1353/etc.2012.0019

Hirsch, S. E., Bruhn, A. L., Lloyd, J. W., & Katsiyannis, A. (2017). FBAs and BIPs: Avoiding and addressing four common challenges related to fidelity. Teaching Exceptional Children, 49(6), 369-379. https://doi.org/10.1177/0040059917711696

Kern, L., Childs, K. E., Dunlap, G., Clarke, S., & Falk, G. D. (1994). Using assessment-based curricular intervention to improve the classroom behavior of a student with emotional and behavioral challenges. Journal of Applied Behavior Analysis, 27(1), 7-19. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1297773/

Kodak, T., Fisher, W. W., Clements, A., Paden, A. R., & Dickes, N. R. (2011). Functional assessment of instructional variables: Linking assessment and treatment. Research in Autism Spectrum Disorders, 5(3), 1059-1077. https://doi.org/10.1016/j.rasd.2010.11.012

Lane, K. L., Bruhn, A. L., Crnobori, M., & Sewell, A. L. (2009). Designing functional assessment-based interventions using a systematic approach: A promising practice for supporting challenging behavior. In T. E. Scruggs, & M. A. Mastropieri, (Eds.), Advances in learning and behavioral disabilities: Vol. 22. Policy and practice (pp. 341–370). Emerald.

Leaf, J. B., Cihon, J. H., Ferguson, J. L., Milne, C. M., Leaf, R., & McEachin, J. (2021). Advances in our understanding of behavioral intervention: 1980 to 2020 for individuals diagnosed with autism spectrum disorder. Journal of Autism and Developmental Disorders, 51, 4395–4410. https://doi.org/10.1007/s10803-020-04481-9

Maharmeh, L. M., Homedan, N. S., & Aleid, W. A. (2019). Reducing the rate of behavioral problems for students with ASD & ADHD using the techniques of FBA. International Journal for Research in Education, 43(2). https://scholarworks.uaeu.ac.ae/ijre/vol43/iss2/9

McKenna, J. W., Flower, A., Kim, M. K., Ciullo, S., & Haring, C. (2015). A systematic review of function‐based interventions for students with learning disabilities. Learning Disabilities Research & Practice, 30(1), 15-28. https://doi.org/10.1111/ldrp.12049

Mueller, M. M., Nkosi, A., & Hine, J. F. (2013). Functional analysis in public schools: A summary of 90 functional analyses. Journal of Applied Behavior Analysis, 44(4), 807-818. https://doi.org/10.1901/jaba.2011.44-807

For further reading, there's a very clear and accurate discussion of functional assessment over on ASAT:

LaRue, R. (2021). Clinical Corner: When should a functional analysis be done and who should do it? Science in Autism Treatment, 18(12). https://asatonline.org/research-treatment/clinical-corner/functional-analysis/